Performance measurement

Capability versus capacity

Supercomputers generally aim for the maximum in capability computing rather than capacity computing. Capability computing is typically thought of as using the maximum computing power to solve a single large problem in the shortest amount of time. Often a capability system is able to solve a problem of a size or complexity that no other computer can, e.g., a very complex weather simulation application.

Capacity computing, in contrast, is typically thought of as using efficient cost-effective computing power to solve a few somewhat large problems or many small problems. Architectures that lend themselves to supporting many users for routine everyday tasks may have a lot of capacity but are not typically considered supercomputers, given that they do not solve a single very complex problem.

Performance metrics

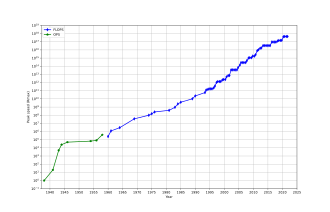

In general, the speed of supercomputers is measured and benchmarked in FLOPS (floating-point operations per second) rather than in MIPS (million instructions per second), which is the usual measure for general-purpose computers. These measurements are commonly combined with an SI prefix. With tera- the shorthand is "TFLOPS" (10¹² FLOPS, pronounced teraflops), and with peta- it is "PFLOPS" (10¹⁵ FLOPS, pronounced petaflops). "Petascale" supercomputers can process one quadrillion (10¹⁵, or 1000 trillion) FLOPS. Exascale refers to computing performance in the exaFLOPS (EFLOPS) range. One EFLOPS is one quintillion (10¹⁸) FLOPS, or one million TFLOPS.
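Converting between these prefixes is a matter of shifting by powers of ten. The following is a minimal sketch that assumes only the prefixes named above. The `convert_flops` helper and the `SI_PREFIX_EXPONENT` table are illustrative names, not part of any standard library.

```python
# Exponent of ten for each SI prefix used with FLOPS in the text (illustrative table).
SI_PREFIX_EXPONENT = {
    "": 0,       # plain FLOPS
    "tera": 12,  # TFLOPS
    "peta": 15,  # PFLOPS
    "exa": 18,   # EFLOPS
}


def convert_flops(value: float, from_prefix: str, to_prefix: str) -> float:
    """Re-express a FLOPS figure under a different SI prefix (hypothetical helper)."""
    value = float(value)
    shift = SI_PREFIX_EXPONENT[from_prefix] - SI_PREFIX_EXPONENT[to_prefix]
    # Scale by exact integer powers of ten; dividing for negative shifts avoids
    # the extra rounding error of multiplying by an inexact float like 10.0 ** -3.
    if shift >= 0:
        return value * 10**shift
    return value / 10**(-shift)


if __name__ == "__main__":
    print(convert_flops(1, "exa", "tera"))           # 1000000.0
    print(convert_flops(415_530.0, "tera", "peta"))  # 415.53 (Fugaku's Rmax from the TOP500 table)
```

The first call confirms that one EFLOPS equals one million TFLOPS.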

No single number can reflect the overall performance of a computer system. Still, the LINPACK benchmark aims to approximate how fast a computer solves numerical problems, and it is widely used in the industry. A FLOPS figure is quoted in one of two ways. The first is the theoretical peak floating-point performance of the processors, derived from the manufacturer's processor specifications and shown as "Rpeak" in the TOP500 lists, which is generally unachievable on real workloads. The second is the achievable throughput measured by the LINPACK benchmark, shown as "Rmax" in the TOP500 list. The benchmark typically solves a dense system of linear equations by performing an LU decomposition of a large matrix. LINPACK performance gives some indication of performance on certain real-world problems. It does not necessarily match the demands of many other supercomputer workloads, however. Those may, for example, require more memory bandwidth, better integer performance, or a high-performance I/O system.
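The difference between a measured Rmax-style figure and a computed Rpeak-style figure can be made concrete with a toy, single-threaded LINPACK-style run. The sketch below is a minimal pure-Python version. It assumes a small random dense system, factors it by LU decomposition with partial pivoting, solves it and times the work. It then converts the time into a rate using the operation count 2/3·n³ + 3/2·n² that the HPL implementation of LINPACK credits per solve. This is not HPL. Real runs use blocked, parallel, heavily tuned code on far larger matrices. `theoretical_peak` uses the common cores × clock × FLOPs-per-cycle estimate. All function names here are hypothetical.

```python
import random
import time


def lu_decompose(a):
    """In-place LU factorisation with partial pivoting.

    Returns the row permutation; afterwards `a` holds L (unit diagonal,
    stored below the diagonal) and U (on and above the diagonal).
    """
    n = len(a)
    perm = list(range(n))
    for k in range(n):
        # Partial pivoting: move the row with the largest |a[i][k]| into row k.
        p = max(range(k, n), key=lambda i: abs(a[i][k]))
        if a[p][k] == 0.0:
            raise ValueError("matrix is singular")
        a[k], a[p] = a[p], a[k]
        perm[k], perm[p] = perm[p], perm[k]
        pivot_row = a[k]
        for i in range(k + 1, n):
            row = a[i]
            factor = row[k] / pivot_row[k]
            row[k] = factor  # keep the L multiplier in the eliminated slot
            for j in range(k + 1, n):
                row[j] -= factor * pivot_row[j]
    return perm


def lu_solve(lu, perm, b):
    """Solve A x = b from the packed LU factors and the pivot permutation."""
    n = len(lu)
    y = [b[perm[i]] for i in range(n)]  # apply the row permutation to b
    for i in range(n):  # forward substitution with unit-diagonal L
        y[i] -= sum(lu[i][j] * y[j] for j in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution with U
        s = y[i] - sum(lu[i][j] * x[j] for j in range(i + 1, n))
        x[i] = s / lu[i][i]
    return x


def hpl_flop_count(n):
    """Operations credited for one n x n solve: 2/3 n^3 + 3/2 n^2."""
    return (2.0 * n**3) / 3.0 + 1.5 * n**2


def mini_linpack(n=120, seed=0):
    """Time one dense solve; return (max-norm residual, achieved FLOPS)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-0.5, 0.5) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    lu = [row[:] for row in a]  # keep the original matrix for the residual check
    start = time.perf_counter()
    perm = lu_decompose(lu)
    x = lu_solve(lu, perm, b)
    elapsed = time.perf_counter() - start
    # Sanity check: the largest entry of |A x - b| should be tiny.
    residual = max(abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n))
    return residual, hpl_flop_count(n) / elapsed


def theoretical_peak(cores, clock_hz, flops_per_cycle):
    """Rpeak-style estimate from specifications: cores x clock x FLOPs per cycle."""
    return cores * clock_hz * flops_per_cycle


def efficiency(rmax, rpeak):
    """Fraction of the theoretical peak actually achieved (Rmax / Rpeak)."""
    return rmax / rpeak
```

Interpreted Python loops are far slower than tuned linear-algebra libraries. The rate `mini_linpack` reports therefore says more about the interpreter than the hardware. This illustrates, in exaggerated form, why a measured figure can sit well below the figure computed from specifications.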

The TOP500 list

Since 1993, the fastest supercomputers have been ranked on the TOP500 list according to their LINPACK benchmark results. The list does not claim to be unbiased or definitive, but it is a widely cited current definition of the "fastest" supercomputer available at any given time.

The following is a recent list of computers that appeared at the top of the TOP500 list. The speed given for each is its "Rmax" rating. In 2018, Lenovo became the world's largest provider of TOP500 supercomputers, with 117 units produced.

| Year | Supercomputer | Rmax (TFLOPS) | Location |
|---|---|---|---|
| 2020 | Fujitsu Fugaku | 415,530.0 | Kobe, Japan |
| 2018 | IBM Summit | 148,600.0 | Oak Ridge, U.S. |
| 2018 | IBM/Nvidia/Mellanox Sierra | 94,640.0 | Livermore, U.S. |
| 2016 | Sunway TaihuLight | 93,014.6 | Wuxi, China |
| 2013 | NUDT Tianhe-2 | 61,444.5 | Guangzhou, China |
| 2019 | Dell Frontera | 23,516.4 | Austin, U.S. |
| 2012 | Cray/HPE Piz Daint | 21,230.0 | Lugano, Switzerland |
| 2015 | Cray/HPE Trinity | 20,158.7 | New Mexico, U.S. |
| 2018 | Fujitsu ABCI | 19,880.0 | Tokyo, Japan |
| 2018 | Lenovo SuperMUC-NG | 19,476.6 | Garching, Germany |
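The TOP500 ordering is by Rmax. As a small illustration, the sketch below hard-codes the rows of the table above and deliberately lists them chronologically. It then sorts them by Rmax and reports each entry in PFLOPS and as a fraction of the leader. The `Entry` type and `rank_by_rmax` function are hypothetical names. The sketch models only the ordering by Rmax, not anything else about how the list is compiled.

```python
from typing import NamedTuple


class Entry(NamedTuple):
    year: int
    name: str
    rmax_tflops: float  # Rmax as listed in the table, in TFLOPS
    location: str


# Rows copied from the table, listed by year here so the sort has work to do.
ENTRIES = [
    Entry(2012, "Cray/HPE Piz Daint", 21_230.0, "Lugano, Switzerland"),
    Entry(2013, "NUDT Tianhe-2", 61_444.5, "Guangzhou, China"),
    Entry(2015, "Cray/HPE Trinity", 20_158.7, "New Mexico, U.S."),
    Entry(2016, "Sunway TaihuLight", 93_014.6, "Wuxi, China"),
    Entry(2018, "IBM Summit", 148_600.0, "Oak Ridge, U.S."),
    Entry(2018, "IBM/Nvidia/Mellanox Sierra", 94_640.0, "Livermore, U.S."),
    Entry(2018, "Fujitsu ABCI", 19_880.0, "Tokyo, Japan"),
    Entry(2018, "Lenovo SuperMUC-NG", 19_476.6, "Garching, Germany"),
    Entry(2019, "Dell Frontera", 23_516.4, "Austin, U.S."),
    Entry(2020, "Fujitsu Fugaku", 415_530.0, "Kobe, Japan"),
]


def rank_by_rmax(entries):
    """Return a new list ordered from highest to lowest Rmax."""
    return sorted(entries, key=lambda e: e.rmax_tflops, reverse=True)


if __name__ == "__main__":
    ranked = rank_by_rmax(ENTRIES)
    leader = ranked[0]
    for rank, e in enumerate(ranked, start=1):
        share = e.rmax_tflops / leader.rmax_tflops
        print(f"{rank:2d}. {e.name:<28} {e.rmax_tflops / 1000:8.1f} PFLOPS ({share:.0%} of #1)")
```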
